The battle scar

The first time we tried to make “human-in-the-loop” real, we did what every reasonable team does:

“Let’s just route the AI output to a reviewer. If it looks good, they approve it.”

On day one it felt safe.

On day seven, the review queue turned into a landfill.

- Reviewers were drowning in low-value approvals (“yep, this is fine”).

- The real exceptions were getting buried.

- And the team started quietly asking the dangerous question:

“Can we turn review off for a bit?”

That’s when you learn the truth:

In high-volume operations, “review everything” is functionally the same as “ship nothing.”

The lie: “We’ll just review everything”

When you review everything, one of three things happens:

- you ship nothing,

- you burn out the reviewers,

- you quietly turn review off.

And in regulated environments, the third outcome is how you end up with a compliance incident and a credibility problem.

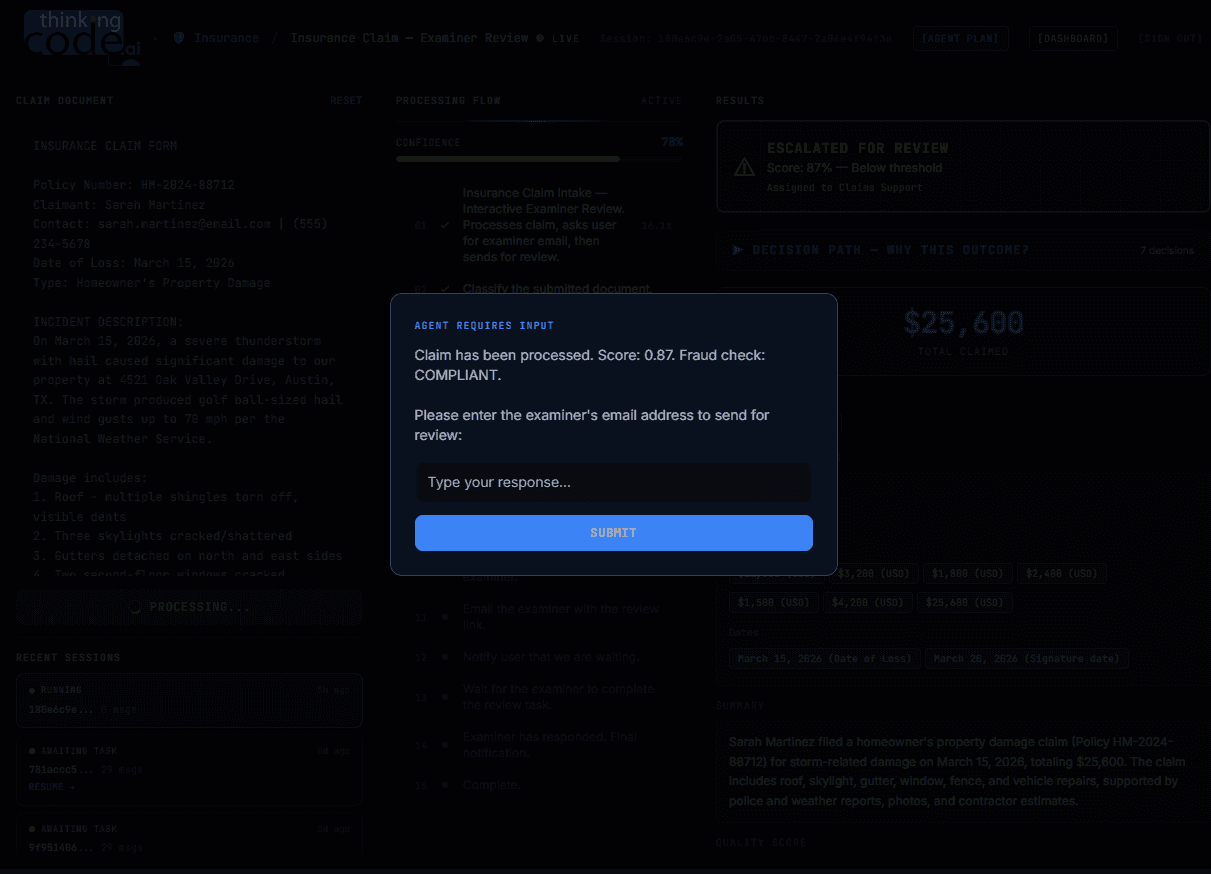

The pattern that works: review as a policy gate

Review isn’t a feature. It’s a policy decision point.

We design review like a gate with explicit rules:

- Auto-approve only when the action is low-risk and confidence is high.

- Escalate when policy requires judgment or when the model is uncertain.

- Block when inputs are missing, conflicting, or non-compliant.

This is the difference between “human-in-the-loop” as a slogan and “human-in-the-loop” as a system.
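Here is a minimal sketch of that gate as a single routing function. The boolean inputs and the 0.9 threshold are hypothetical placeholders for whatever signals your workflow actually produces. The point is the ordering: block first, then escalate, and auto-approve only what is explicitly low-risk.

```python
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    ESCALATE = "escalate"
    BLOCK = "block"


def policy_gate(
    inputs_complete: bool,
    inputs_consistent: bool,
    compliant: bool,
    low_risk: bool,
    needs_judgment: bool,
    confidence: float,
    threshold: float = 0.9,  # hypothetical value; tune per workflow
) -> Decision:
    # Block first: missing, conflicting, or non-compliant inputs never proceed.
    if not (inputs_complete and inputs_consistent and compliant):
        return Decision.BLOCK
    # Escalate when policy calls for judgment or the model is uncertain.
    if needs_judgment or confidence < threshold:
        return Decision.ESCALATE
    # Auto-approve only the low-risk, high-confidence remainder.
    if low_risk:
        return Decision.AUTO_APPROVE
    # Anything not explicitly marked low-risk defaults to a human.
    return Decision.ESCALATE
```

Defaulting the final branch to `ESCALATE` rather than `AUTO_APPROVE` is a deliberate choice in this sketch: a case the rules don't cover should land in front of a person, not slip through.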

The gates we use (in real workflows)

These are the ones that show up again and again in insurance, healthcare, and PBM (pharmacy benefit management) operations.

1) Required-field gate

If core identifiers are missing, don’t proceed.

Example: missing member ID, conflicting DOB, missing provider NPI, incomplete claim header.

This avoids the worst kind of automation: confidently doing the wrong thing.
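A minimal sketch of a required-field check, assuming records arrive as plain dicts. The field names (`member_id`, `dob`, `provider_npi`, `claim_header`) and the idea of passing DOB values from several source documents are hypothetical illustrations, not a real schema. The function returns reasons instead of a bare boolean so the block is explainable later.

```python
from typing import Optional

# Hypothetical field names; substitute your own core identifiers.
REQUIRED_FIELDS = ("member_id", "dob", "provider_npi", "claim_header")


def required_field_gate(record: dict, dob_sources: Optional[list] = None) -> list:
    """Return a list of blocking reasons; an empty list means the gate passes."""
    reasons = []
    for name in REQUIRED_FIELDS:
        value = record.get(name)
        # Treat None and blank strings as missing.
        if value is None or (isinstance(value, str) and not value.strip()):
            reasons.append(f"missing:{name}")
    # A claim header with any empty sub-field counts as incomplete.
    header = record.get("claim_header")
    if isinstance(header, dict) and any(v in (None, "") for v in header.values()):
        reasons.append("incomplete:claim_header")
    # A DOB that disagrees across source documents is a conflict, not a guess.
    if dob_sources and len(set(dob_sources)) > 1:
        reasons.append("conflict:dob")
    return reasons
```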

2) Confidence gate (but not the naive one)

“Confidence < threshold → review” is fine.

The battle scar is that a single threshold rarely works across fields and steps.

- The threshold for extracting member ID should be stricter than the threshold for extracting plan name.

- A summarization step may tolerate lower confidence because it’s reviewer-facing.

So we use per-field / per-step thresholds, and we log them.
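One way to express per-field, per-step thresholds is a lookup keyed by `(step, field)` with a strict fallback. The threshold values and key names below are hypothetical; the sketch only shows the shape, including logging the threshold that was actually applied.

```python
import logging

logger = logging.getLogger("hitl.confidence_gate")

# Hypothetical thresholds: identifiers are stricter than descriptive fields,
# and a reviewer-facing summary tolerates lower confidence.
THRESHOLDS = {
    ("extract", "member_id"): 0.98,
    ("extract", "plan_name"): 0.85,
    ("summarize", "case_summary"): 0.70,
}
DEFAULT_THRESHOLD = 0.95  # unknown (step, field) pairs fall back to a strict value


def confidence_gate(step: str, field: str, confidence: float) -> bool:
    """Return True if the value needs human review."""
    threshold = THRESHOLDS.get((step, field), DEFAULT_THRESHOLD)
    needs_review = confidence < threshold
    # Log the threshold actually applied so the decision can be audited later.
    logger.info(
        "step=%s field=%s confidence=%.3f threshold=%.3f review=%s",
        step, field, confidence, threshold, needs_review,
    )
    return needs_review
```

The same 0.95 score sends a member ID to review but lets a plan name through, which is exactly the asymmetry a single global threshold can't express. (The log lines appear only once logging is configured at INFO level.)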

3) Impact gate

Anything that can materially affect a person or money gets review:

- financial decisions

- adverse outcomes

- coverage denials

- clinical implications

Even if the model is “confident”.
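A minimal sketch of an impact gate, assuming each proposed action carries tags describing what it touches. The tag names and the `ProposedAction` type are hypothetical. Note that the model's confidence is carried along but intentionally never consulted.

```python
from dataclasses import dataclass, field

# Hypothetical tags mirroring the categories above.
HIGH_IMPACT = frozenset({
    "financial_decision",
    "adverse_outcome",
    "coverage_denial",
    "clinical_implication",
})


@dataclass
class ProposedAction:
    name: str
    confidence: float
    tags: set = field(default_factory=set)


def impact_gate(action: ProposedAction) -> bool:
    """Return True if the action must be reviewed by a human."""
    # action.confidence is deliberately ignored: material impact alone
    # triggers review, even when the model is confident.
    return not HIGH_IMPACT.isdisjoint(action.tags)
```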

4) Novelty / out-of-distribution gate

The model is least reliable exactly when you need it most: weird edge cases.

We route novelty to experts by looking for:

- unseen document types

- new form templates

- unusual value ranges

- changed payer/provider rules
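These four signals can be checked against a profile of what the workflow has already seen. The sketch below assumes a hypothetical `KnownProfile` holding known document types, template IDs, per-field value ranges, and a rules version string; how you build that profile (from historical volume, a template registry, etc.) is out of scope here.

```python
from dataclasses import dataclass, field


@dataclass
class KnownProfile:
    """What the workflow has seen before (hypothetical structure)."""
    doc_types: set = field(default_factory=set)
    template_ids: set = field(default_factory=set)
    value_ranges: dict = field(default_factory=dict)  # field -> (low, high)
    rules_version: str = ""


def novelty_reasons(doc: dict, profile: KnownProfile) -> list:
    """Return reasons a document looks out-of-distribution; empty means familiar."""
    reasons = []
    if doc.get("doc_type") not in profile.doc_types:
        reasons.append("unseen_doc_type")
    if doc.get("template_id") not in profile.template_ids:
        reasons.append("new_template")
    # Flag values outside the range observed historically.
    for name, (low, high) in profile.value_ranges.items():
        value = doc.get("values", {}).get(name)
        if value is not None and not (low <= value <= high):
            reasons.append(f"unusual_value:{name}")
    # A payer/provider rule change makes past behavior a weaker guide.
    if doc.get("rules_version") != profile.rules_version:
        reasons.append("changed_rules")
    return reasons
```

Any non-empty result routes the case to an expert instead of trusting the model's confidence score.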

The overlooked constraint: reviewer load is the system

Most HITL implementations fail because nobody models reviewer throughput.

A simple way to start is to write down four numbers:

- incoming cases/day

- % expected to hit review

- average review time

- reviewers available

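Multiply the first three to get daily review demand in reviewer-hours (cases × review rate × minutes per review ÷ 60), then compare it with the hours your reviewers can actually spend on the queue. If you don’t do this math up front, you’re “designing” with vibes.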
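The arithmetic fits in a few lines. This sketch takes the four numbers above plus one assumption of its own: `productive_hours_per_reviewer`, defaulting to a hypothetical 6 hours because reviewers rarely spend a full shift in the queue.

```python
def review_capacity(
    cases_per_day: float,
    review_rate: float,           # fraction of cases expected to hit review, 0..1
    minutes_per_review: float,
    reviewers: int,
    productive_hours_per_reviewer: float = 6.0,  # assumption, not a full 8h shift
) -> dict:
    if reviewers <= 0:
        raise ValueError("reviewers must be positive")
    # Demand: how many reviewer-hours the queue generates per day.
    demand_hours = cases_per_day * review_rate * minutes_per_review / 60
    # Supply: how many reviewer-hours are realistically available per day.
    capacity_hours = reviewers * productive_hours_per_reviewer
    return {
        "demand_hours": demand_hours,
        "capacity_hours": capacity_hours,
        "utilization": demand_hours / capacity_hours,  # > 1.0 means the queue grows
    }
```

With hypothetical inputs of 2,000 cases/day, a 30% review rate, 4 minutes per review, and 5 reviewers, demand is 40 reviewer-hours against 30 available. The queue grows every day no matter how diligent the team is.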

A practical target

In many workflows, a good first milestone is:

- >70% low-risk steps automated end-to-end

- <30% routed to human review

- <5% routed to SME escalation

(Exact numbers depend on risk tolerance, case mix, and policy.)
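Tracking the milestone is a matter of comparing routing shares against the targets. This sketch assumes `reviewed` counts every case a human touched and `sme` is the subset of those escalated to an SME; the default targets are the example numbers above, exposed as parameters because they depend on your risk tolerance.

```python
def meets_first_milestone(
    total: int,
    automated: int,
    reviewed: int,
    sme: int,
    min_automated: float = 0.70,
    max_review: float = 0.30,
    max_sme: float = 0.05,
) -> dict:
    """Check one period's routing counts against the example targets."""
    if total <= 0:
        raise ValueError("total must be positive")
    shares = {
        "automated": automated / total,
        "human_review": reviewed / total,
        "sme": sme / total,  # assumed to be a subset of human_review
    }
    checks = {
        "automated": shares["automated"] > min_automated,
        "human_review": shares["human_review"] < max_review,
        "sme": shares["sme"] < max_sme,
    }
    return {"shares": shares, "checks": checks, "met": all(checks.values())}
```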

Escalation ladder (keep it boring and explicit)

We keep escalation simple:

- Ops reviewer (fast, procedural)

- SME reviewer (rare, high context)

- Compliance sign-off (defined path, very rare)

The important part isn’t the ladder — it’s that everyone knows what triggers each rung.
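“Everyone knows what triggers each rung” can be made literal by writing the mapping down as data. The trigger names and their rung assignments below are hypothetical examples; the sketch routes a case to the highest rung any of its triggers requires, and sends unrecognized triggers to the ops reviewer rather than ignoring them.

```python
from enum import IntEnum


class Rung(IntEnum):
    NONE = 0
    OPS = 1          # fast, procedural
    SME = 2          # rare, high context
    COMPLIANCE = 3   # defined path, very rare


# Hypothetical trigger -> rung mapping; the point is that it is explicit.
TRIGGERS = {
    "low_confidence": Rung.OPS,
    "unusual_value": Rung.OPS,
    "novel_document": Rung.SME,
    "clinical_implication": Rung.SME,
    "regulatory_exception": Rung.COMPLIANCE,
}


def escalation_rung(triggers: list) -> Rung:
    """Return the highest rung required by any trigger (NONE if no triggers)."""
    # Unknown triggers default to OPS so they still get a human look.
    return max((TRIGGERS.get(t, Rung.OPS) for t in triggers), default=Rung.NONE)
```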

Evidence: why “looks fine” isn’t enough

If a reviewer approves something, we want to know:

- what source document it came from

- what fields were extracted

- what policy gates passed/failed

- what the reviewer changed

This is not about surveillance — it’s about being able to answer:

“Why did the system do that?”

…three months later, when nobody remembers.
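A minimal sketch of an evidence record covering the four questions above. The `ReviewEvidence` type, its field names, and the JSON serialization are illustrative assumptions, not a prescribed schema. Reviewer edits are stored as before/after pairs so the original extraction is never overwritten.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ReviewEvidence:
    case_id: str
    source_document: str      # e.g. a document ID or storage URI (hypothetical)
    extracted_fields: dict    # field -> value as the model extracted it
    gate_results: dict        # gate name -> "pass" / "fail"
    reviewer_id: str = ""
    reviewer_changes: dict = field(default_factory=dict)
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def record_change(self, name: str, after) -> None:
        """Record a reviewer edit, keeping the originally extracted value."""
        before = self.extracted_fields.get(name)
        self.reviewer_changes[name] = {"before": before, "after": after}

    def to_json(self) -> str:
        # Stable key order makes stored records easier to diff later.
        return json.dumps(asdict(self), sort_keys=True)
```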

The goal

Humans shouldn’t babysit the model.

They should handle the decisions that truly require judgment.

And the system should make that judgment easy: clear gates, clean evidence, and a queue that stays humane.